Mechanical Kubler: Visual Paths Through Time

I finally got the chance to push through a little idea about walking through visual time with a new Twitter bot I’m calling @MechaKubler.

![A pathway generated by Mechanical Kubler.](https://mechanical-kubler.github.io/assets/images/88735e8915ee1900340d62ec278018bf.gif)

A pathway generated by Mechanical Kubler.

Inspired by Google Cultural Institute’s “X Degrees of Separation”, I was curious to see if it was possible to recreate that app by hand using a more focused collection of works, and constraining the kinds of paths that would get drawn between two given images. Was it possible to make this path move only forward in time? Or only backwards? To only consider a certain set of objects by type or nationality? The idea had been gnawing at me for some time.

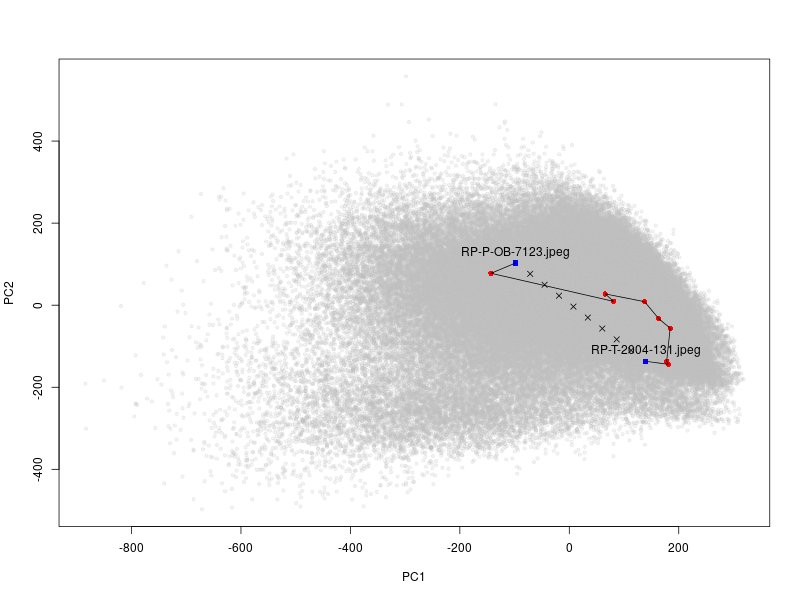

Once an hour, @MechaKubler assembles an 8-image-long path between two objects from the Rijksmuseum, trying to find pictures that are roughly evenly separated across an expanse of visual similarity space. I used the penultimate max pooling layer of the pre-trained VGG-16 convolutional neural network[1] to produce this space of multidimensional features (an involved way of saying “a list of 512 numbers per image”) for over 200,000 images of artworks in the Rijksmuseum collections.
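To make “a list of 512 numbers per image” a little more concrete: a pooling layer in a network like VGG-16 outputs a small grid of positions, each holding one value per channel, and that grid has to be collapsed somehow to get a single vector. The sketch below is plain Python on a toy 2×2 grid with 3 channels, standing in for the real 512. It takes the maximum of each channel over the whole grid. That global-max step is an assumption for illustration only; the post does not say how the grid was actually reduced, and `global_max_pool` is a hypothetical helper name.

```python
def global_max_pool(feature_map):
    """Collapse an H x W x C feature map (nested lists) into C numbers.

    Assumption for illustration: each channel is summarized by its maximum
    over every spatial position. Averaging would be an equally plausible choice.
    """
    n_channels = len(feature_map[0][0])
    return [
        max(cell[c] for row in feature_map for cell in row)
        for c in range(n_channels)
    ]


# Toy stand-in for one image's pooled output: 2 rows x 2 columns x 3 channels.
# A real VGG-16 pooling layer would have 512 channels instead of 3.
toy_map = [
    [[0.1, 0.5, 0.0], [0.7, 0.2, 0.0]],
    [[0.3, 0.9, 0.0], [0.4, 0.1, 0.6]],
]

feature_vector = global_max_pool(toy_map)
print(feature_vector)  # [0.7, 0.9, 0.6]
```

Whatever the exact reduction, the result is the same kind of thing: one fixed-length list of numbers per image, which is what lets every artwork be treated as a point in a shared space.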

Rather than just find the closest object at hand, it will take a chronological path, expressly moving either forwards or backwards in time as it traverses this visual space. Hence the homage to George Kubler, who considered the seriation of visual form through history in his 1962 book The Shape of Time. One of his core arguments was that there exist “prime objects”: ideal solutions to visual problems that artists then manifest through physical variants. This is not unlike how @MechaKubler works. Using an R package I wrote to generate nearest-neighbor paths through numeric matrices, it identifies several ideal points sitting on a line, evenly spaced between two randomly chosen objects. These ideal points in the VGG-16 feature space can’t be directly translated back into images[2]; alone, they’re just separate lists of 512 numbers. But it is possible to search through the real objects to find those whose own 512-number-long feature vectors are very close to the ideal points.
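The procedure described above can be sketched in a few lines. This is a minimal Python illustration, not the R package itself. The function names (`interpolate`, `chronological_path`), the `(year, vector)` data layout, the use of Euclidean distance, and the exact form of the date constraint are all assumptions made for the example. The toy vectors have 2 dimensions rather than 512.

```python
import math


def interpolate(start, end, n_points):
    """Return n_points vectors evenly spaced on the line from start to end,
    both endpoints included. These play the role of the "ideal points"."""
    if n_points < 2:
        raise ValueError("need at least the two endpoints")
    return [
        [s + (e - s) * i / (n_points - 1) for s, e in zip(start, end)]
        for i in range(n_points)
    ]


def chronological_path(objects, start_id, end_id, n_points=8):
    """Pick, for each interior ideal point, the nearest unused real object
    whose date keeps the path moving in one direction through time.

    objects maps an id to a (year, feature_vector) pair (hypothetical layout).
    """
    start_year, start_vec = objects[start_id]
    end_year, end_vec = objects[end_id]
    forward = start_year <= end_year
    path = [start_id]
    current_year = start_year

    for ideal in interpolate(start_vec, end_vec, n_points)[1:-1]:
        lo, hi = (current_year, end_year) if forward else (end_year, current_year)
        candidates = [
            (math.dist(vec, ideal), oid)
            for oid, (year, vec) in objects.items()
            if oid not in path and oid != end_id and lo <= year <= hi
        ]
        if not candidates:
            continue  # no admissible object for this ideal point; skip it
        _, best = min(candidates)
        path.append(best)
        current_year = objects[best][0]

    path.append(end_id)
    return path


# Toy collection: "x" sits exactly on the ideal line but is dated too early,
# so the chronological constraint forces the path through "b" instead.
toy_objects = {
    "a": (1600, [0.0, 0.0]),
    "b": (1620, [1.1, 0.9]),
    "c": (1650, [2.0, 2.1]),
    "d": (1700, [3.0, 3.0]),
    "x": (1500, [1.0, 1.0]),
}

print(chronological_path(toy_objects, "a", "d", n_points=4))  # ['a', 'b', 'c', 'd']
print(chronological_path(toy_objects, "d", "a", n_points=4))  # ['d', 'c', 'b', 'a']
```

A brute-force scan like this is fine for a toy example. For a collection of 200,000 images, some kind of nearest-neighbor index would likely be needed to keep each search fast.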

Visualizing the ideal linear path versus the less-straight path of real objects. (Note that the objects are actually much closer to the ideal path than they appear in this two-dimensional projection of the 512-dimensional space.)

So while it’s impossible to see what these “prime object” points look like, we can get a glimpse of that path via real objects acting as surrogates for those points.

“Visual similarity” in @MechaKubler careens between the sublime and the absurd. It gets hung up on things like large book gutters or oval frames. Its pathways get snared in eddies of preparatory sketches and exhibition installation photos. But it also snags onto serendipitous resonances of pose like that between Winter and Salome here. Also critical: Kubler was very much interested in causality. @MechaKubler is very much not. It knows nothing about artists’ circumstances or location or community or patrons, etc.

So this bot can’t make individual art history arguments about the objects it finds. But it does, I think, have something to say about how we define prime objects in our day-to-day art history. Like any humanistic discipline, we’re continually torn between abstraction and specifics. It is difficult to render abstract arguments without enough images to materialize that argument through visible examples. Perhaps more than in history or literary studies, our specifics are obstreperously present: we don’t tend to quote excerpts of images like we can lines from books or pages from archival documents. And so we end up doing a kind of art historical nearest-neighbor search: “I have this idea, now where are the images that will best exemplify it?” We trawl through museums and photo archives and image libraries and exhibition catalogs to find those objects that come closest to those untouchable “prime images” that are the perfect solutions for particular problems, and that are the perfect ingredients in our art historical recipes.

Many digital art history projects take this process of finding to be their main goal: how do we catalog, sort, and display images in the right ways to make individual ones findable? It’s rare that they purposefully call attention to this real/ideal tension that drives art historians on the image hunt in the first place. No matter how fascinating our narrative ideal objects are, we constantly need to negotiate with and against the images actually available to us: a corpus constrained by everything from our foundational image-collecting habits and scholarly canons to things as mundane as reproduction rights.

So anyway, take some time to check out the weird paths it finds. I may experiment a bit in the future with adding some other kinds of constraints, like paintings-only or ceramics-only paths. The actual feature space could also be drastically improved by using a fine-tuned CNN like the Replica project, so I may give that a go at some point. And if you like @MechaKubler, you should also take a look at some forebears like Ryan Baumann’s search for close matches across this corpus using Pastec, John Resig’s Ukiyo-e visual search engine, and the Barnes Collection’s computer vision experiments. Hopefully this can encourage us to revisit Kubler’s ideas about how to write histories of visual morphologies even in the absence of social context - ideas all the more important in an age where more images are being looked at by machines than by humans. It’s definitely prompted me to look more closely at more objects than I’ve done in some time.

1. Karen Simonyan and Andrew Zisserman, “Very Deep Convolutional Networks for Large-Scale Image Recognition,” arXiv:1409.1556 [cs], September 4, 2014, http://arxiv.org/abs/1409.1556.

2. Well, not without a lot of work. For more on what it entails to reverse-engineer new pixels out of feature vectors, see Chris Olah et al., “The Building Blocks of Interpretability,” Distill 3, no. 3 (March 6, 2018): e10, doi:10.23915/distill.00010.